
Apple Previews Next-Generation Vision Pro Persona and M5 Chip Architecture in Rare Technical Discussion

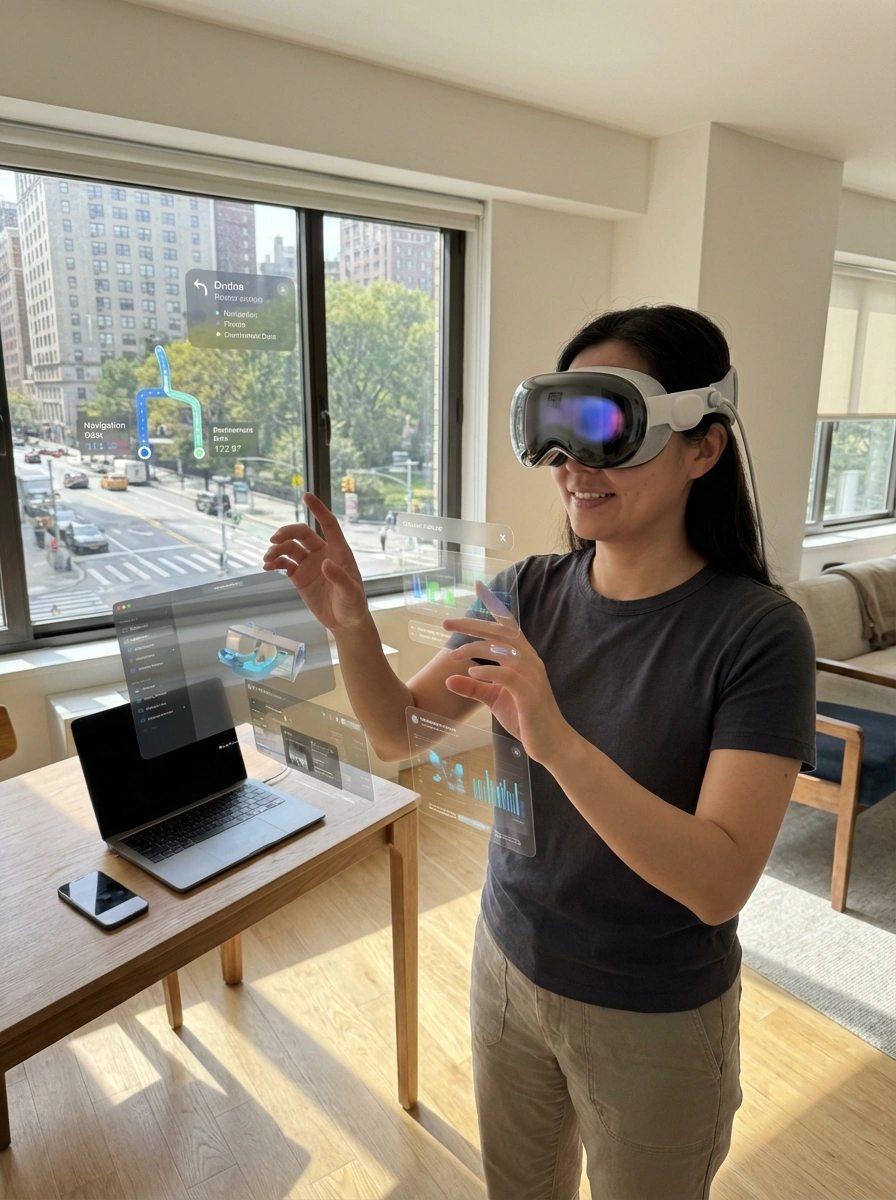

Apple has offered an unusually detailed look into the future of Vision Pro, revealing major updates to its Persona system, the role of the upcoming M5 chip, and the company’s broader direction in spatial computing and on-device AI.

The insights come from a rare technical discussion between members of the press and two leaders of the Vision Pro team, and they give a clearer picture of how Apple is developing Vision Pro into a long-term spatial computing platform.

Persona Takes a Major Leap with 3D Gaussian Splatting

Following the release of visionOS 26, users have noticed a marked improvement in Persona realism. At the core of this upgrade is a relatively new rendering technique known as 3D Gaussian Splatting (3DGS).

Unlike traditional computer graphics, which relies on manually constructed meshes, 3DGS learns geometry directly from captured images. The system records video from multiple angles and infers the structure of a face as a collection of volumetric Gaussian elements: ellipsoid-shaped primitives, each with its own position, scale, and transparency.
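To make the idea concrete, here is a minimal sketch of how a renderer can turn a set of Gaussians into one pixel colour, using the front-to-back alpha blending that Gaussian splatting renderers typically use. It is an illustration under stated assumptions, not Apple's implementation: the `Splat` layout and the `splat_alpha` and `shade_pixel` names are hypothetical, the Gaussians are assumed to be already projected onto the image plane, and they are axis-aligned (no rotation) to keep the example short.

```python
import math
from dataclasses import dataclass


@dataclass
class Splat:
    """One Gaussian after projection to the image plane (hypothetical layout)."""
    x: float        # projected centre, in pixels
    y: float
    sx: float       # standard deviation along x, in pixels
    sy: float       # standard deviation along y, in pixels
    depth: float    # distance from the camera, used only for sorting
    opacity: float  # peak opacity in [0, 1]
    color: tuple    # (r, g, b), each in [0, 1]


def splat_alpha(s, px, py):
    """Opacity that splat s contributes at pixel (px, py)."""
    dx = (px - s.x) / s.sx
    dy = (py - s.y) / s.sy
    # Gaussian falloff: full opacity at the centre, fading with distance.
    return s.opacity * math.exp(-0.5 * (dx * dx + dy * dy))


def shade_pixel(splats, px, py):
    """Blend splats front to back; return (r, g, b) and leftover transmittance."""
    rgb = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light not yet blocked by nearer splats
    for s in sorted(splats, key=lambda s: s.depth):
        a = splat_alpha(s, px, py)
        weight = transmittance * a
        for i in range(3):
            rgb[i] += weight * s.color[i]
        transmittance *= 1.0 - a
    return tuple(rgb), transmittance
```

For example, a half-opaque red splat in front of a fully opaque blue one, both centred on the pixel, blends to equal parts red and blue with no transmittance left over. Because every splat fades out smoothly, overlapping splats mix gradually instead of meeting at hard polygon edges, which is one way to understand the soft colour transitions attributed to the technique.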

Apple confirmed that Persona now uses a pure Gaussian-based approach, without hybrid mesh geometry, allowing for highly natural colour transitions and surface detail that meshes struggle to replicate.

From FaceTime Avatars to Digital Identity

Apple’s long-term vision for Persona extends beyond simple video calls. Persona is being developed as a digital representation of identity, integrated directly into three-dimensional environments.

The company also highlighted HUGS (Human Gaussian Splatting), an open-source project that expands the technique to full-body avatars. These representations can be rigged with skeletal animation, enabling immersive telepresence where participants appear as full-scale spatial avatars within each other’s real environments.
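Rigging a Gaussian avatar to a skeleton can be pictured with linear blend skinning, a standard character-animation technique in which each point follows a weighted mix of bone movements. The sketch below is an assumption-laden illustration, not code from HUGS or Persona: it works in 2D for brevity, moves only the Gaussian centres, and the `rigid_transform` and `skin_point` names are hypothetical.

```python
import math


def rigid_transform(angle, tx, ty):
    """Return a function that rotates a 2D point by angle (radians), then translates it."""
    c, s = math.cos(angle), math.sin(angle)

    def apply(p):
        x, y = p
        return (c * x - s * y + tx, s * x + c * y + ty)

    return apply


def skin_point(point, weights, bone_transforms):
    """Linear blend skinning: weighted average of the point as moved by each bone."""
    x = y = 0.0
    for w, transform in zip(weights, bone_transforms):
        bx, by = transform(point)
        x += w * bx
        y += w * by
    return (x, y)


# Usage: a Gaussian centre influenced equally by a resting bone and a bone
# that has shifted two units to the right.
bones = [rigid_transform(0.0, 0.0, 0.0), rigid_transform(0.0, 2.0, 0.0)]
centre = skin_point((1.0, 0.0), (0.5, 0.5), bones)
```

A full avatar would apply the same blend in 3D and would also rotate and scale each Gaussian's ellipsoid, not just move its centre; those steps are omitted here.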

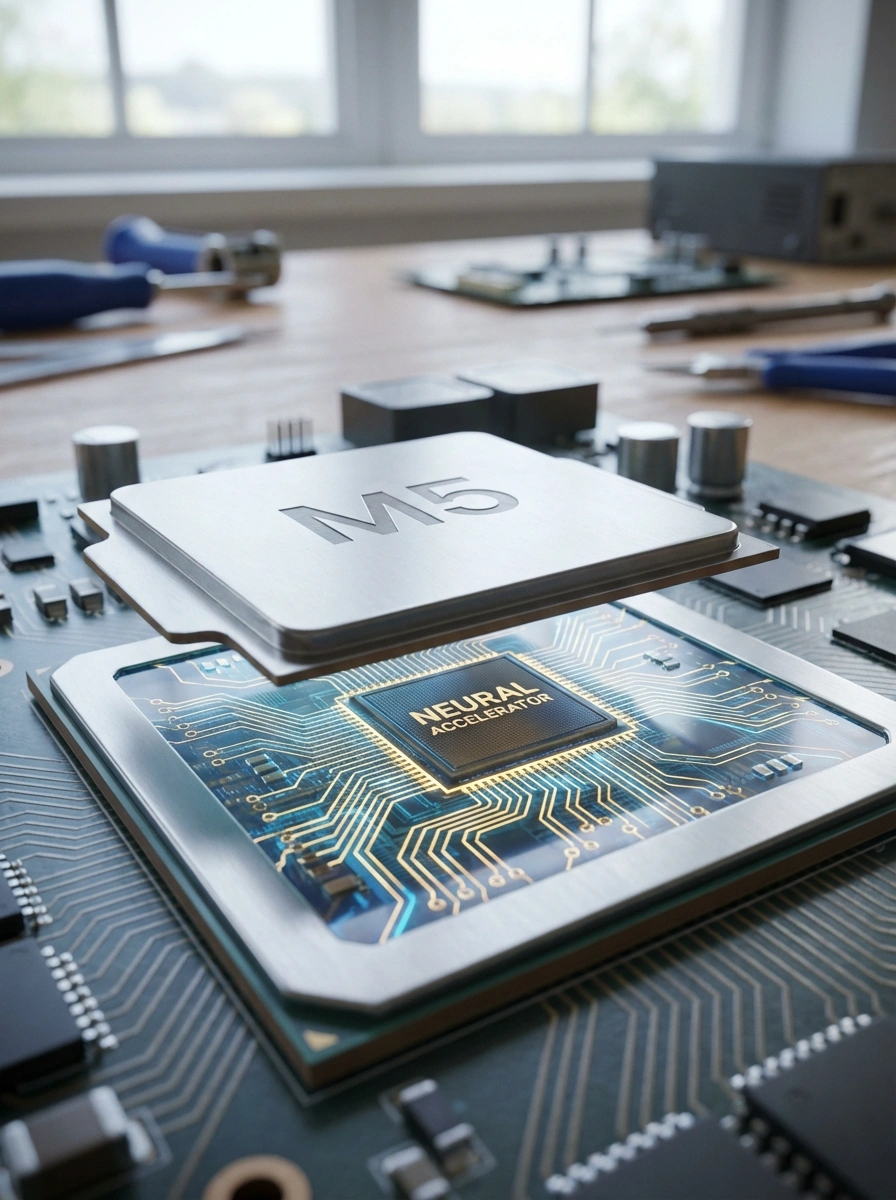

M5 Chip: A New GPU Architecture Built for AI + Graphics

The M5 chip delivers its most meaningful gains through architectural changes rather than raw scaling. For the first time, each GPU core includes a dedicated Neural Accelerator.

These GPU-level accelerators are designed specifically for AI-graphics fusion workloads, such as:

- AI denoising

- Video super-resolution

- Frame generation

- Advanced rendering effects

Previously, data had to move repeatedly between the GPU and the NPU (Apple's Neural Engine). With M5, these operations can be completed entirely within the GPU, reducing latency and improving efficiency for real-time spatial tasks.

Why Vision Pro Benefits Most from M5

While gains on standard laptops may be subtle, Vision Pro is the primary beneficiary of the M5 architecture. As a video see-through (VST) headset, it continuously runs AI-intensive tasks including:

- SLAM (Simultaneous Localisation and Mapping)

- Environmental understanding

- AI-based passthrough denoising

- Persona rendering

Nearly all of these workloads map directly onto M5’s AI-enhanced GPU design, which helps the headset sustain a smooth, high-fidelity spatial experience.

Apple’s Broader AI Philosophy

Apple reiterated that its strategy focuses on device-centric intelligence—constructing persistent, personal world models that combine visual input, motion data, and spatial understanding.

Projects such as FastVLM, an open-source vision-language model, exemplify this direction, offering fast, low-power inference for real-time, context-aware intelligence. This forms a closed loop: the real world is vectorised, interpreted by AI, and projected back into immersive spatial experiences.

Technology, Perception, and the Future

Apple’s Vision Pro roadmap suggests a deeper ambition: to reshape how humans perceive and interact with reality through computation. By combining spatial AI, realistic digital embodiment, and purpose-built silicon, Apple is positioning Vision Pro as more than a headset—it is an experiment in how digital systems can augment human perception itself.