
Closed-Track ADAS Test Sparks Debate After Multiple Smart Cars Struggle With High-Speed Safety Scenarios

A newly released closed-track evaluation of navigation-assisted driving systems has reignited public debate over how reliable today’s “smart driving” features really are—especially when conditions become messy, unpredictable, and time-critical.

The test, published by Chinese auto media outlet Dongchedi, put 36 vehicles through a series of active-safety scenarios on a closed highway. The lineup covered a wide spread of popular domestic and international models, but the outcome—marked by repeated failures—has triggered a wave of concern.

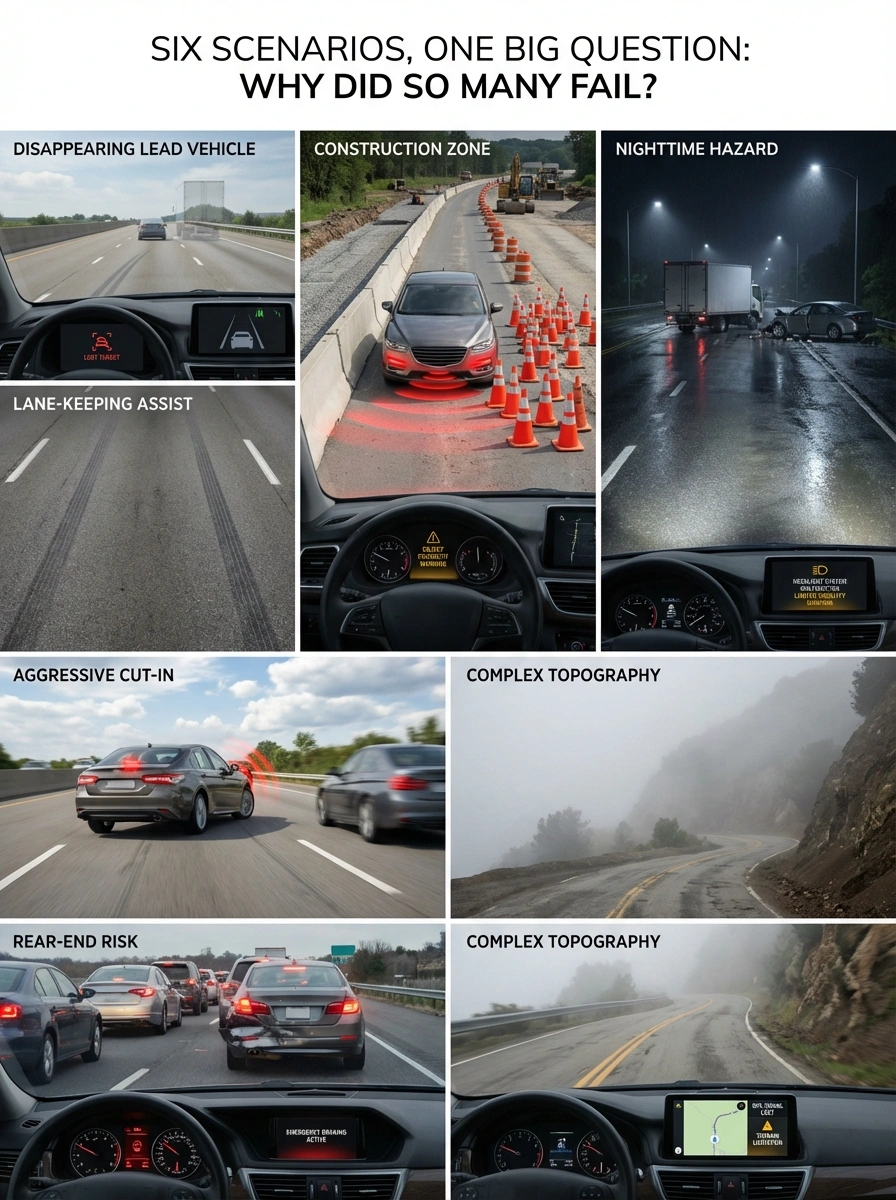

Six Scenarios, One Big Question: Why Did So Many Fail?

Vehicles were evaluated across six demanding active-safety situations, including:

- Disappearing Lead Vehicle: A sudden loss of tracking for the target ahead.

- Construction Zones: Scenarios with extremely short buffer distances.

- Nighttime Hazards: Blocked paths with unlit accident vehicles or stalled trucks.

- Aggressive Cut-ins: Daytime highway merging and high-speed maneuvers.

- Rear-end Risks: Sudden slowing or stopping of high-speed traffic.

Even on a controlled course, these scenarios introduced realistic constraints, such as limited visibility and complex topography, which overwhelmed systems that usually perform well in routine traffic.

Perception Looks Fine—Planning and Control May Be the Bottleneck

A key observation from the test is that many vehicles were able to "see" the hazards. The bigger weakness appears to be planning and control.

In modern “end-to-end” stacks, large neural networks translate sensor inputs into a planned trajectory. The critique is not that these models are useless, but that they can become unstable when encountering unfamiliar combinations of variables. The system recognizes a hazard but cannot reliably decide what to do next—brake, steer, or evade—under extreme pressure.
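To make the "what to do next" step concrete, here is a minimal Python sketch of a fixed, rule-based decision keyed to time-to-collision (gap divided by closing speed). It is purely illustrative: the `Hazard` type, the `fallback_action` function, and both thresholds are hypothetical and do not describe any vehicle in the test.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    WARN = "warn"
    BRAKE = "brake"


@dataclass(frozen=True)
class Hazard:
    gap_m: float              # distance to the obstacle, in metres
    closing_speed_mps: float  # positive when the gap is shrinking


# Hypothetical thresholds, chosen for illustration only.
WARN_TTC_S = 4.0
BRAKE_TTC_S = 2.0


def time_to_collision(hazard: Hazard) -> float:
    """Seconds until contact at constant closing speed; inf if not closing."""
    if hazard.closing_speed_mps <= 0:
        return float("inf")
    return hazard.gap_m / hazard.closing_speed_mps


def fallback_action(hazard: Hazard) -> Action:
    """Map a detected hazard to one action; same input, same output."""
    ttc = time_to_collision(hazard)
    if ttc <= BRAKE_TTC_S:
        return Action.BRAKE
    if ttc <= WARN_TTC_S:
        return Action.WARN
    return Action.CONTINUE


if __name__ == "__main__":
    # 50 m gap closing at 30 m/s leaves under 2 s, so the rule brakes.
    print(fallback_action(Hazard(gap_m=50.0, closing_speed_mps=30.0)))
```

A rule like this always returns the same action for the same input, which is the property the critique finds lacking. A learned planner can weigh far more context than a single threshold can.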


The “Probability Problem” and Edge Case Training

Real driving cannot rely on probabilistic luck. When risk is high, systems need consistent, deterministic behavior. However, extreme collision scenarios are rare, which makes them difficult to “learn” from real-world data alone.

To compensate, automakers are using:

- Cloud-based Simulations: Creating synthetic “worst-case” scenarios at scale.

- Generative Training: Distilling synthetic edge-case knowledge back into the vehicle-side model.

While these pipelines are evolving, the test results suggest many are still in the early stages of producing consistently safe behavior across extreme conditions.
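As a rough illustration of generating synthetic “worst-case” scenarios at scale, the Python sketch below enumerates every combination of a few scenario parameters and ranks them by how little time the vehicle has to react. The parameter names and values are assumptions made for this example. Production simulation pipelines are far richer and are not described in the test report.

```python
from itertools import product

# Hypothetical parameter ranges, chosen for illustration only.
SPEEDS_KMH = (80, 100, 120)
GAPS_M = (20, 40, 80)
LIGHTING = ("day", "night")
OBSTACLES = ("stalled_truck", "accident_vehicle", "construction_barrier")


def generate_scenarios():
    """Yield one dict per combination of the parameters above."""
    for speed, gap, light, obstacle in product(
        SPEEDS_KMH, GAPS_M, LIGHTING, OBSTACLES
    ):
        yield {
            "speed_kmh": speed,
            "gap_m": gap,
            "lighting": light,
            "obstacle": obstacle,
        }


def time_budget_s(scenario):
    """Seconds to reach a stationary obstacle at constant speed."""
    speed_mps = scenario["speed_kmh"] / 3.6
    return scenario["gap_m"] / speed_mps


def hardest_first(scenarios):
    """Sort scenarios so the shortest time budgets come first."""
    return sorted(scenarios, key=time_budget_s)


if __name__ == "__main__":
    ranked = hardest_first(generate_scenarios())
    print(len(ranked), "scenarios; hardest:", ranked[0])
```

Even this toy grid shows how quickly combinations multiply, which is part of why rare highway events are hard to cover with real-world data alone.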

Regulators Re-Emphasize: Drivers Remain Responsible

The controversy arrives alongside renewed messaging from authorities emphasizing that these systems are driver-assistance, not self-driving.

Authorities in China’s traffic management system have stressed that drivers remain the responsible party. New technology-ethics guidelines also urge clearer communication to consumers to prevent the misunderstanding and misuse of advanced driver-assistance functions.

What This Means for Consumers

The headline takeaway is that driver assistance is still—by design—assistance. Even if a system works impressively in many daily scenarios, rare highway events can stack constraints in ways that quickly exceed what today’s models handle reliably.

For drivers, the message is clear: treat these systems as tools, not replacements for attention. The next leap forward in safety won't come from smoother lane-keeping, but from robust emergency planning and a deeper public understanding of technological limits.