Tracing the Rise of Artificial Intelligence: From Human Curiosity to a Cognitive Revolution

“As a journalist, I have always been deeply curious about the world.” With these words, Chinese media figure Yang Lan opens the documentary Exploring Artificial Intelligence, framing a journey driven by questions that increasingly define our era: Are machines becoming smarter than humans? Will artificial intelligence one day replace us? And how far can technology extend the boundaries of human intelligence?

These questions gained global urgency in 2016, a year widely regarded as the breakout moment for artificial intelligence. From big data and cloud computing to deep learning and autonomous systems, AI rapidly moved from laboratories into public consciousness.

One defining event was the March 2016 match in which AlphaGo defeated Go champion Lee Sedol four games to one. The match marked a shift in how people perceived machine intelligence.

Language, Cognition, and the Machine Mind

Human intelligence evolved through language. Around 70,000 years ago, the development of complex speech systems allowed humans to describe their environment, exchange abstract ideas, and build societies. This “cognitive revolution” fundamentally reshaped civilization.

The documentary asks a parallel question: how do machines understand language?

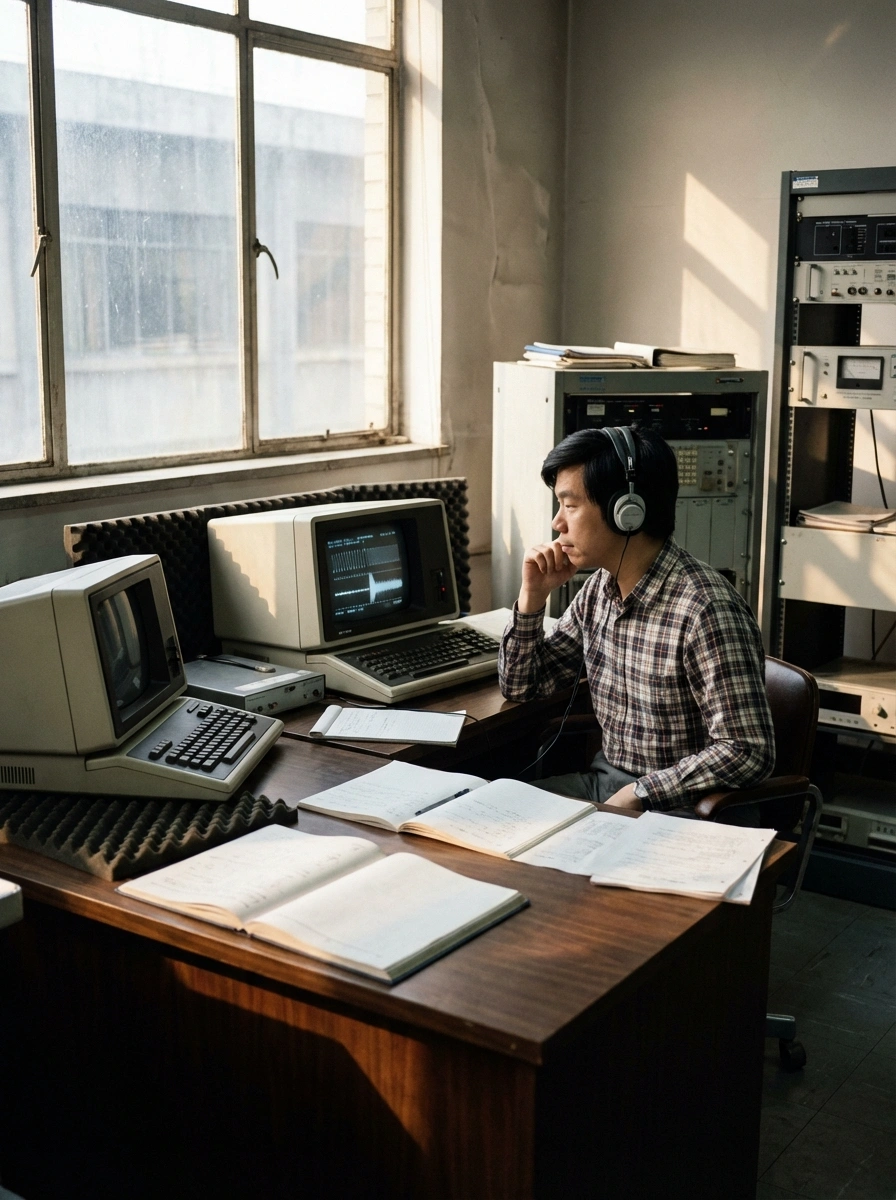

Early attempts were modest. In 1952, researchers at Bell Labs built a system named “Audrey” that could recognize the ten spoken English digits. It was groundbreaking, but it remained far from true natural language understanding. Progress was incremental and often constrained by limited data and computing power.

A major turning point came in the 1980s, when Kai-Fu Lee began speech recognition research at Carnegie Mellon University. His work produced systems that could recognize continuous speech from many different speakers, an essential step toward natural human-computer interaction. For the first time, machines could process spoken language in a way that resembled real dialogue rather than a series of isolated words or commands.

From Rule-Based Systems to Deep Learning

Despite these advances, speech and language systems struggled for decades. The breakthrough arrived in the mid-2000s, driven by a new paradigm: deep learning.

In 2006, Geoffrey Hinton published influential research on deep neural networks, an approach loosely inspired by the layered structure of the human brain. His work emphasized scale: larger models, more layers, and vastly more data. The approach resonated with researchers such as Deng Li, who showed that, when paired with large datasets and modern computing power, deep networks could cut speech recognition error rates dramatically. In early experiments, the reduction sometimes exceeded 20 percent.
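Speech recognition accuracy is commonly reported as word error rate (WER). This is the number of word substitutions, deletions, and insertions needed to turn a system's transcript into the correct one, divided by the number of words in the correct transcript. The documentary does not say whether "20 percent" is an absolute or a relative drop, and the difference matters. A fall from 30 percent WER to 24 percent is a 20 percent relative reduction, but only 6 percentage points in absolute terms. The Python sketch below illustrates both ideas. It is not the method used in the research described above, and the function names and sample transcripts are hypothetical.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    # dist[i][j]: fewest edits turning the first i reference words
    # into the first j hypothesis words.
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i  # delete every reference word
    for j in range(len(hyp) + 1):
        dist[0][j] = j  # insert every hypothesis word
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,         # deletion
                dist[i][j - 1] + 1,         # insertion
                dist[i - 1][j - 1] + cost,  # substitution (or exact match)
            )
    return dist[len(ref)][len(hyp)] / len(ref)


def relative_reduction(old_rate: float, new_rate: float) -> float:
    """Drop in error rate expressed as a fraction of the old rate."""
    return (old_rate - new_rate) / old_rate


# Hypothetical transcripts, for illustration only.
reference = "call me at five nine two one"
baseline = "call me at fine nine to one"   # two substitutions
improved = "call me at five nine to one"   # one substitution

old_wer = word_error_rate(reference, baseline)
new_wer = word_error_rate(reference, improved)
print(f"baseline WER {old_wer:.1%}, improved WER {new_wer:.1%}")
print(f"relative reduction {relative_reduction(old_wer, new_wer):.0%}")

# A change from 30% to 24% WER is a 20% relative reduction,
# even though it is only 6 percentage points in absolute terms.
print(f"{relative_reduction(0.30, 0.24):.0%}")
```

Treating WER as a word-level edit distance is the usual formulation. The quadratic table used here is adequate for sentence-length transcripts.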

What had held neural networks back in the 1990s was not flawed theory but a shortage of data and computing power. As the internet generated massive datasets and processing power grew, deep learning flourished, and problems that once seemed intractable began to yield.

Machines Enter the Physical World

The documentary also explores how artificial intelligence moves beyond software into the physical environment. At Stanford University’s AI laboratory, the PR2, a two-armed robot with a loosely humanlike form, performs tasks such as navigating corridors, using elevators, and buying coffee for researchers.

Its design is not meant to imitate humans but to interact efficiently with a world built for them: seeing, grasping, and applying controlled force.

This reflects a broader shift in robotics: machines are no longer confined to factories. They are learning to coexist with people, adapting to complex, unstructured environments.